RAG: The Antidote to AI Hallucinations in Enterprise Systems

The Hallucination Problem

Large Language Models are powerful, but they have a critical flaw: they can confidently generate plausible-sounding information that is completely false. This phenomenon, known as "hallucination," is widely cited as one of the biggest barriers to enterprise AI adoption. When a model hallucinates, it doesn't just provide wrong information; it presents it with the same fluent confidence as a correct answer, which makes the error hard to detect without domain expertise.

Imagine deploying an AI assistant to your customer service team, only to have it confidently tell a customer that your product has features it doesn't have, or worse, provide incorrect pricing information. The damage to trust and reputation can be catastrophic.

Why Hallucinations Happen

LLMs generate text by predicting the next most likely token based on patterns in their training data. They don't actually "know" facts; they predict what words should come next. When a query concerns something outside the training data, or asks for a specific detail the model never reliably learned (an exact price, a clause number, a date), the model fills the gap with plausible-sounding but fabricated information.
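To make "predicting what comes next" concrete, here is a deliberately tiny sketch: a bigram model built from a hypothetical three-sentence corpus that always appends the most frequent next word. Real LLMs predict subword tokens with a neural network rather than counting words, so treat this only as an illustration of the principle, not of how any production model works.

```python
from collections import Counter, defaultdict

# Hypothetical toy training text; real LLMs learn from vastly more data
# and predict subword tokens with a neural network, not word counts.
corpus = [
    "the basic plan costs 10 dollars per month",
    "the pro plan costs 25 dollars per month",
    "the pro plan includes priority support",
]

# Bigram counts: for each word, how often each other word follows it.
next_counts: dict[str, Counter] = defaultdict(Counter)
for sentence in corpus:
    words = sentence.split()
    for current, following in zip(words, words[1:]):
        next_counts[current][following] += 1

def complete(prompt: str, max_words: int = 6) -> str:
    """Greedily append the most frequent next word until no continuation is known."""
    words = prompt.split()
    for _ in range(max_words):
        candidates = next_counts.get(words[-1])
        if not candidates:
            break
        # most_common(1) breaks ties in favor of the word seen first.
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)

print(complete("the enterprise plan"))  # the enterprise plan costs 10 dollars per month
print(complete("the pro plan"))         # the pro plan costs 10 dollars per month
```

The "enterprise plan" never appears in the corpus, yet the sketch produces a confident price for it. Even for "the pro plan" it answers 10 dollars, contradicting its own training text, because it only looks at the previous word: after "costs" it has seen "10" and "25" equally often and simply takes the first. That is a toy version of a fluent, confident, wrong answer.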

This is particularly dangerous in enterprise contexts where accuracy is non-negotiable. A legal AI assistant that hallucinates case law. A medical AI that invents drug interactions. A financial AI that makes up regulatory requirements. These aren't theoretical problems—they're real risks that organizations face today.

The RAG Solution

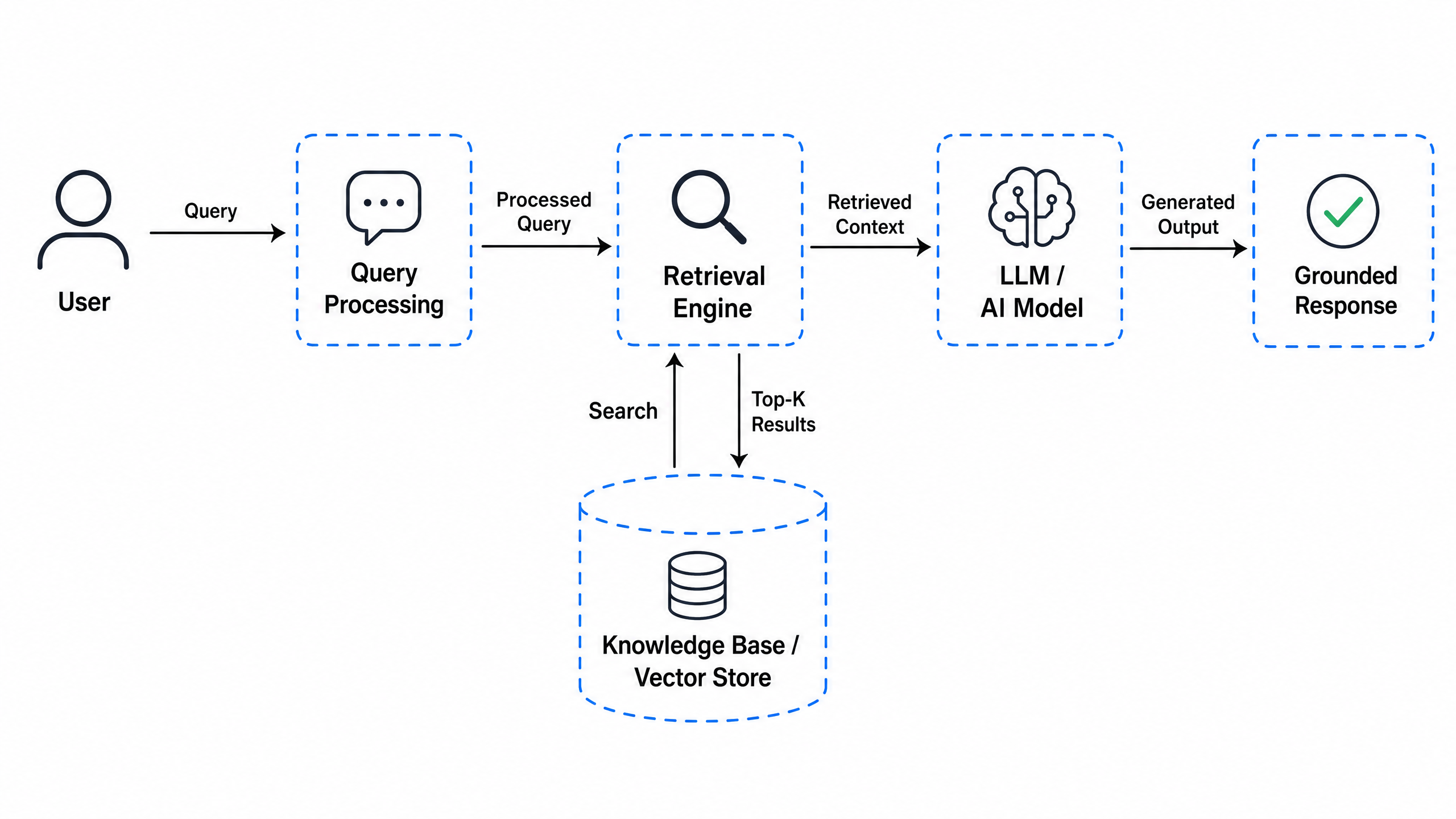

Retrieval-Augmented Generation (RAG) is one of the most effective solutions to the hallucination problem. Instead of relying solely on the model's training data, RAG grounds the model's responses in actual, verified information from your organization's data sources.

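Here's how it works:

- Retrieve: When a user asks a question, the system searches your knowledge base (documents, wikis, databases) for the passages most relevant to that question.

- Augment: The retrieved passages are inserted into the prompt alongside the question, usually with instructions to answer only from that context.

- Generate: The model writes its answer from the supplied context, ideally citing the sources it used so the answer can be checked.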
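The retrieval and prompt-augmentation part of this flow can be sketched in a few lines of Python. This is a minimal illustration, not a production retriever: it assumes a tiny in-memory knowledge base with hypothetical document IDs, and it scores documents by plain word overlap where a real system would use a search index or an embedding model. The generation step is omitted because it depends on whichever LLM API you use.

```python
import re

# Hypothetical knowledge base: document ID -> verified text.
KNOWLEDGE_BASE = {
    "pricing-2024": "The Pro plan costs 25 dollars per user per month.",
    "features-sso": "Single sign-on (SSO) is available on the Enterprise plan only.",
    "support-hours": "Support is available Monday to Friday, 9am to 5pm CET.",
}

def tokenize(text: str) -> set[str]:
    """Lowercased word set; a stand-in for a real tokenizer or embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, k: int = 2) -> list[tuple[str, str]]:
    """Return up to k documents sharing the most words with the query."""
    q = tokenize(query)
    scored = [(len(q & tokenize(text)), doc_id, text) for doc_id, text in KNOWLEDGE_BASE.items()]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(doc_id, text) for score, doc_id, text in scored[:k] if score > 0]

def build_prompt(query: str, docs: list[tuple[str, str]]) -> str:
    """Augment the user question with retrieved context, tagged by source ID."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in docs)
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer using only the context above."

question = "How much does the Pro plan cost?"
prompt = build_prompt(question, retrieve(question))
print(prompt)
# In a full system, `prompt` would now be sent to the LLM (the generation step).
```

Note that the SSO document is also retrieved, purely because it shares "the" and "plan" with the question. That is the kind of noise that better scoring (such as BM25 or embeddings) and re-ranking aim to reduce.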

The key insight is simple: if you provide the model with the right information, it's much harder for it to hallucinate. The model becomes a sophisticated tool for synthesizing and presenting information you've already verified, rather than a source of truth itself.

Real-World Impact

Organizations implementing RAG have reported dramatic improvements:

- Accuracy: Reduction in factually incorrect responses from 30-40% to under 5%

- Trust: Users gain confidence in AI-generated content because it's verifiable

- Compliance: Easier to audit and trace where information came from

- Customization: Answers reflect your specific terminology, policies, and procedures, because they are drawn from your own documents rather than requiring the model to be retrained
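For the compliance point, one simple pattern is to log every answer together with the IDs of the source documents that were placed in the prompt, so a reviewer can later see exactly what the model was shown. A minimal sketch, assuming a hypothetical `AuditRecord` structure written as one JSON line per interaction; the field names are illustrative, not a standard.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone

@dataclass
class AuditRecord:
    """One traceable interaction: what was asked, what was retrieved, what was answered."""
    query: str
    source_ids: list[str]
    answer: str
    # Recorded in UTC at creation time.
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

def to_log_line(record: AuditRecord) -> str:
    """Serialize a record as a single JSON line, suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)

record = AuditRecord(
    query="How much does the Pro plan cost?",
    source_ids=["pricing-2024"],
    answer="The Pro plan costs 25 dollars per user per month [pricing-2024].",
)
line = to_log_line(record)
print(line)
# An auditor can later parse the line and look up each source ID in the knowledge base.
```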

Implementation Considerations

Implementing RAG effectively requires more than just connecting an LLM to a database. You need to consider:

Data Quality: Your retrieval system is only as good as the data it searches. Ensure your knowledge base is well-organized, up-to-date, and properly indexed.
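Keeping a knowledge base well organized and current usually starts at ingestion: documents are split into passages small enough to retrieve precisely, and each passage keeps metadata such as its source and last-updated date so stale content can be filtered out. The sketch below assumes word-count chunking with overlap and a hypothetical one-year freshness cutoff; the file names are made up, and production pipelines often split on headings or sentences instead.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class Chunk:
    source: str    # originating document, kept so answers can cite it
    updated: date  # last-updated date of that document
    text: str

def chunk_document(source: str, updated: date, text: str,
                   size: int = 50, overlap: int = 10) -> list[Chunk]:
    """Split text into windows of `size` words; consecutive windows share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be >= 0 and smaller than size")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    return [
        Chunk(source, updated, " ".join(words[start:start + size]))
        for start in range(0, max(len(words) - overlap, 1), step)
    ]

def fresh_only(chunks: list[Chunk], today: date, max_age_days: int = 365) -> list[Chunk]:
    """Keep only chunks whose source was updated within the last max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [c for c in chunks if c.updated >= cutoff]

# Usage with a synthetic 120-word document (hypothetical file names).
handbook = " ".join(f"w{i}" for i in range(120))
chunks = chunk_document("handbook.pdf", date(2024, 3, 1), handbook)
print(len(chunks))  # 3 chunks: words 0-49, 40-89, 80-119
stale = chunk_document("old-policy.pdf", date(2020, 1, 1), "obsolete refund policy")
print(len(fresh_only(chunks + stale, today=date(2024, 6, 1))))  # 3: the 2020 chunk is dropped
```

The overlap means a sentence that straddles a chunk boundary still appears whole in at least one chunk, at the cost of some duplicated text in the index.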

Retrieval Strategy: Not all information is equally relevant. Techniques such as semantic search, hybrid search (running keyword and semantic search together), and re-ranking help ensure the most relevant context reaches the model.
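Hybrid search runs a keyword search and a semantic (vector) search side by side and then merges their ranked results. One common, simple way to merge them is reciprocal rank fusion (RRF): each document scores the sum of 1 / (k + rank) over every list it appears in, with k = 60 being the constant from the original RRF paper. The two result lists below are hypothetical stand-ins for real keyword and vector search output; re-ranking with a dedicated model would typically be a separate step after fusion.

```python
from collections import defaultdict

def reciprocal_rank_fusion(result_lists: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one ranking.

    Each document earns 1 / (k + rank) from every list it appears in (rank starts at 1),
    so documents ranked well by several retrievers rise to the top.
    """
    scores: dict[str, float] = defaultdict(float)
    for results in result_lists:
        for rank, doc_id in enumerate(results, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)

# Hypothetical outputs of two retrievers for the same query.
keyword_hits = ["pricing-2024", "pricing-2022", "faq-billing"]
vector_hits = ["faq-billing", "pricing-2024", "support-hours"]

print(reciprocal_rank_fusion([keyword_hits, vector_hits]))
# ['pricing-2024', 'faq-billing', 'pricing-2022', 'support-hours']
```

RRF only uses ranks, not raw scores, which is convenient because keyword scores and vector similarities live on different scales and cannot be added directly.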

Prompt Engineering: How you structure the prompt matters. The model needs clear instructions on how to use the retrieved information and what to do if the information doesn't fully answer the question.
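A prompt template that states both rules explicitly (answer only from the supplied context, and say so when the context is insufficient) might look like the sketch below. The wording, the bracketed citation format, and the exact fallback sentence are illustrative assumptions that should be tuned and tested against your own model and data.

```python
GROUNDED_PROMPT = """You are a support assistant.
Answer the question using ONLY the sources below.
After each fact, cite its source ID in square brackets, for example [pricing-2024].
If the sources do not contain the answer, reply exactly:
"I don't have enough information to answer that."

Sources:
{sources}

Question: {question}
Answer:"""

def render_prompt(question: str, sources: dict[str, str]) -> str:
    """Fill the template with retrieved passages, each labeled by its source ID."""
    listed = "\n".join(f"[{source_id}] {text}" for source_id, text in sources.items())
    # An explicit marker when retrieval found nothing is meant to steer the model to the fallback reply.
    return GROUNDED_PROMPT.format(sources=listed or "(no sources found)", question=question)

print(render_prompt(
    "Is SSO included in the Pro plan?",
    {"features-sso": "Single sign-on (SSO) is available on the Enterprise plan only."},
))
```

A fixed fallback sentence has a practical benefit: it is easy to detect in logs, so you can measure how often the knowledge base fails to cover real questions.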

Evaluation: Implement continuous monitoring to catch hallucinations that slip through. Track accuracy metrics and user feedback.
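Automated checks can flag suspect answers for human review. The sketch below is a deliberately crude heuristic, assuming that an answer sentence whose content words mostly do not appear in the retrieved context is unsupported. The stopword list and the 0.5 threshold are arbitrary assumptions; production evaluation typically relies on stronger methods (for example an entailment model or an LLM acting as a judge) alongside the user feedback mentioned above.

```python
import re

# Arbitrary, minimal stopword list for this sketch.
STOPWORDS = {"the", "a", "an", "is", "are", "of", "to", "and", "in", "on", "for", "per", "it"}

def content_words(text: str) -> set[str]:
    """Lowercased words minus stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}

def unsupported_sentences(answer: str, context: str, threshold: float = 0.5) -> list[str]:
    """Return answer sentences whose content words are mostly absent from the context."""
    context_words = content_words(context)
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", answer.strip()):
        words = content_words(sentence)
        if not words:
            continue
        support = len(words & context_words) / len(words)
        if support < threshold:
            flagged.append(sentence)
    return flagged

context = "The Pro plan costs 25 dollars per user per month."
answer = "The Pro plan costs 25 dollars per user per month. It also includes free hardware."
print(unsupported_sentences(answer, context))  # ['It also includes free hardware.']
```

Tracking the share of answers with at least one flagged sentence over time gives a rough accuracy signal; word overlap will miss paraphrased fabrications, so it complements rather than replaces human review.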

The Future of Enterprise AI

RAG represents a fundamental shift in how we think about AI in enterprise contexts. Rather than asking "Can we build an AI that knows everything?", we're asking "Can we build an AI that reliably uses what we know?"

This is a much more achievable goal, and it's the path to AI systems that organizations can trust and deploy with confidence.

Ready to reduce hallucinations in your AI systems? Talk to us about implementing RAG for your enterprise data.

Ready to get started?

Let's discuss how Infonex can help accelerate your AI initiatives.